Ask a smart home device for the weather forecast, and it can take several seconds for the device to respond. One reason this latency occurs is that connected devices don’t have enough memory or power to store and run the enormous machine-learning models needed for the device to understand what a user is asking of it. The model is stored in a data center that may be hundreds of miles away, where the answer is computed and sent to the device.

MIT researchers have created a new method for computing directly on these devices, which drastically reduces this latency. Their technique shifts the memory-intensive steps of running a machine-learning model to a central server, where components of the model are encoded onto light waves.

The waves are transmitted to a connected device using fiber optics, which enables large amounts of data to be sent lightning-fast through a network. The receiver then employs a simple optical device that rapidly performs computations using the parts of a model carried by those light waves.

This technique leads to more than a hundredfold improvement in energy efficiency when compared to other methods. It could also improve security, since a user’s data do not need to be transferred to a central location for computation.

This method could enable a self-driving car to make decisions in real time while using just a tiny percentage of the energy currently required by power-hungry computers. It could also allow a user to have a latency-free conversation with their smart home device, be used for live video processing over cellular networks, or even enable high-speed image classification on a spacecraft tens of millions of miles from Earth.

“Every time you want to run a neural network, you have to run the program, and how fast you can run the program depends on how fast you can pipe the program in from memory. Our pipe is massive: it corresponds to sending a full feature-length movie over the internet every millisecond or so. That is how fast data comes into our system. And it can compute as fast as that,” says senior author Dirk Englund, an associate professor in the Department of Electrical Engineering and Computer Science (EECS) and member of the MIT Research Laboratory of Electronics.

Joining Englund on the paper are lead author and EECS grad student Alexander Sludds; EECS grad student Saumil Bandyopadhyay; Research Scientist Ryan Hamerly; as well as others from MIT, the MIT Lincoln Laboratory, and Nokia Corporation. The research is published today in Science.

Lightening the load

Neural networks are machine-learning models that use layers of connected nodes, or neurons, to recognize patterns in datasets and perform tasks, like classifying images or recognizing speech. But these models can contain billions of weight parameters, which are numeric values that transform input data as they are processed. These weights must be stored in memory. At the same time, the data transformation process involves billions of algebraic computations, which require a great deal of power to perform.

The process of fetching data (the weights of the neural network, in this case) from memory and moving them to the parts of a computer that do the actual computation is one of the biggest limiting factors to speed and energy efficiency, says Sludds.

“So our thought was, why don’t we take all that heavy lifting (the process of fetching billions of weights from memory), move it away from the edge device and put it someplace where we have abundant access to power and memory, which gives us the ability to fetch those weights quickly?” he says.

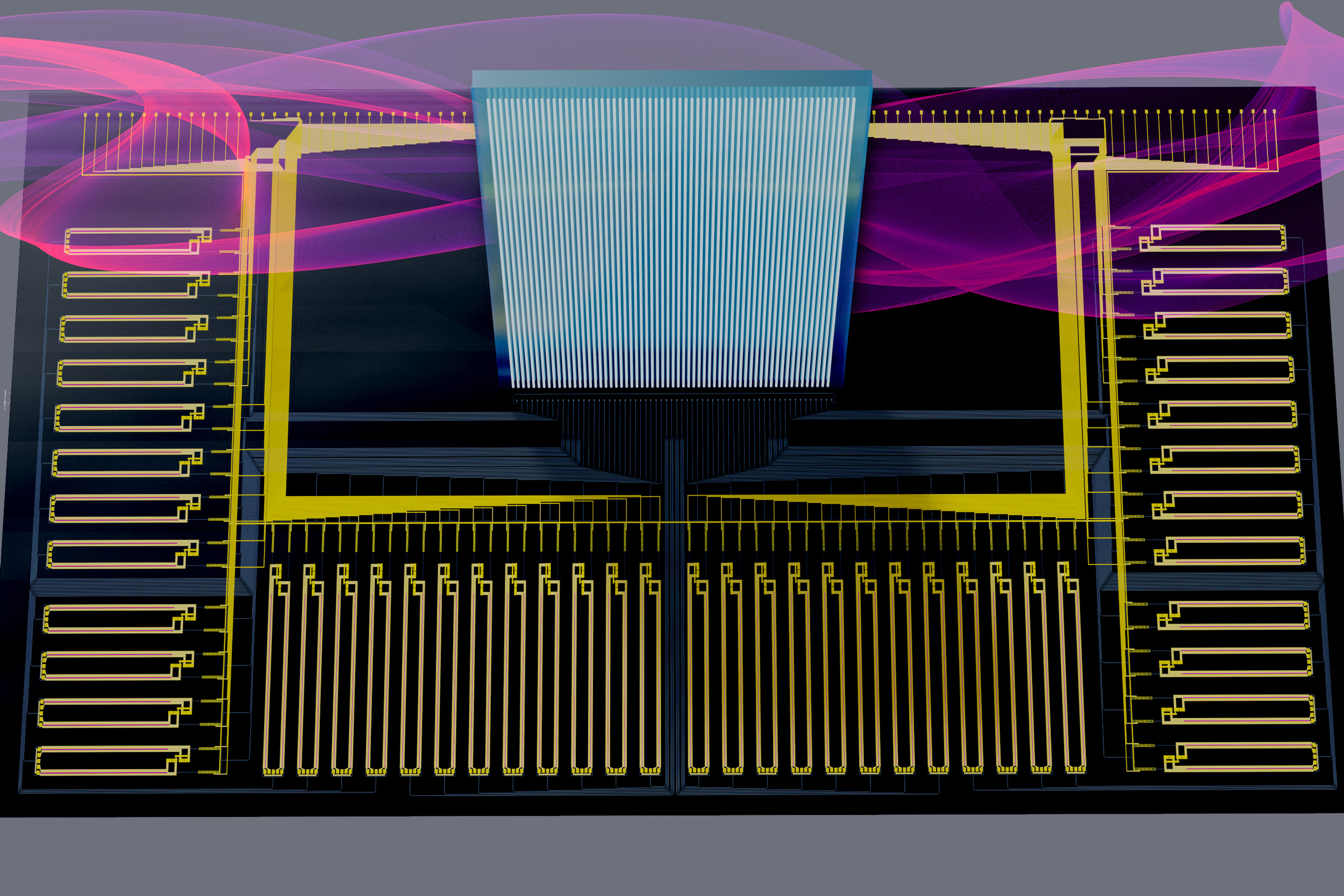

The neural network architecture they developed, Netcast, involves storing weights in a central server that is connected to a novel piece of hardware called a smart transceiver. This smart transceiver, a thumb-sized chip that can receive and transmit data, uses technology known as silicon photonics to fetch trillions of weights from memory each second.

It receives weights as electrical signals and imprints them onto light waves. Since the weight data are encoded as bits (1s and 0s), the transceiver converts them by switching lasers: a laser is turned on for a 1 and off for a 0. It combines these light waves and then periodically transfers them through a fiber optic network, so a client device doesn’t need to query the server to receive them.
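As a rough illustration of the on/off encoding described above, the Python sketch below turns one weight into a sequence of laser states and back again. The 8-bit unsigned quantization and all function names are assumptions made for this example; the article does not say how the researchers' transceiver formats its bits.

```python
from typing import List


def weight_to_bits(weight: int, n_bits: int = 8) -> List[int]:
    """Return the bits of an unsigned integer weight, most significant first.

    The 8-bit default is an illustrative assumption, not a detail from the research.
    """
    if not 0 <= weight < 2 ** n_bits:
        raise ValueError("weight does not fit in the requested number of bits")
    return [(weight >> shift) & 1 for shift in range(n_bits - 1, -1, -1)]


def bits_to_laser_states(bits: List[int]) -> List[bool]:
    """Map each bit to a laser state: True (on) for a 1, False (off) for a 0."""
    return [bit == 1 for bit in bits]


def laser_states_to_weight(states: List[bool]) -> int:
    """Rebuild the integer weight from the sequence of on/off laser states."""
    value = 0
    for laser_on in states:
        value = (value << 1) | int(laser_on)
    return value


if __name__ == "__main__":
    states = bits_to_laser_states(weight_to_bits(173))
    print(states)
    print(laser_states_to_weight(states))
```

A receiver that samples the light once per bit period can rebuild the same integer, which is why the client never has to ask the server for a particular weight: it only has to listen.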

“Optics is great because there are many ways to carry data within optics. For instance, you can put data on different colors of light, and that enables a much higher data throughput and greater bandwidth than with electronics,” explains Bandyopadhyay.

Trillions per second

Once the light waves arrive at the client device, a simple optical component known as a broadband “Mach-Zehnder” modulator uses them to perform super-fast, analog computation. This involves encoding input data from the device, such as sensor information, onto the weights. Then it sends each individual wavelength to a receiver that detects the light and measures the result of the computation.
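The computation described above can be pictured as a multiply-and-accumulate done in light. The Python sketch below is a numerical stand-in and not a model of the actual hardware: it assumes each wavelength carries one row of weights, that the modulator scales every wavelength by the current input value at each time step, and that each wavelength's detector sums what it receives over time. Those assumptions, and the name `optical_matvec`, are illustrative only.

```python
from typing import List


def optical_matvec(weight_rows: List[List[float]], inputs: List[float]) -> List[float]:
    """Simulate one weighted sum per wavelength channel.

    weight_rows[c][t] is the weight arriving on wavelength c at time step t
    (an assumed layout). inputs[t] is the client's input value at step t.
    """
    for row in weight_rows:
        if len(row) != len(inputs):
            raise ValueError("each wavelength must carry one weight per input value")

    detector_totals = [0.0] * len(weight_rows)
    for step, input_value in enumerate(inputs):
        # The broadband modulator applies the same input value to every wavelength.
        for channel, row in enumerate(weight_rows):
            # Each detector accumulates the modulated light on its own wavelength.
            detector_totals[channel] += row[step] * input_value
    return detector_totals


if __name__ == "__main__":
    weights = [[0.5, 1.0], [0.25, 0.0]]  # two wavelengths, two time steps
    sensor_values = [2.0, 4.0]
    print(optical_matvec(weights, sensor_values))
```

Each entry of the result is the dot product of one weight row with the input, which is the basic operation of a neural-network layer.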

The researchers devised a way to use this modulator to do trillions of multiplications per second, which vastly increases the speed of computation on the device while using only a tiny amount of power.

“In order to make something faster, you need to make it more energy efficient. But there is a trade-off. We’ve built a system that can operate with about a milliwatt of power but still do trillions of multiplications per second. In terms of both speed and energy efficiency, that is a gain of orders of magnitude,” Sludds says.
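To put the quoted figures in perspective, the short Python calculation below divides power by throughput to estimate the energy spent on each multiplication. It assumes exactly 1 milliwatt and exactly one trillion multiplications per second, which are round stand-ins for "about a milliwatt" and "trillions"; the result is an order-of-magnitude estimate, not a measured value.

```python
def energy_per_multiplication(power_watts: float, mults_per_second: float) -> float:
    """Return the energy in joules used per multiplication (power / throughput)."""
    if mults_per_second <= 0:
        raise ValueError("throughput must be positive")
    return power_watts / mults_per_second


# Assumed round numbers standing in for the approximate figures in the quote.
ASSUMED_POWER_WATTS = 1e-3        # about a milliwatt
ASSUMED_MULTS_PER_SECOND = 1e12   # one trillion multiplications per second

energy_joules = energy_per_multiplication(ASSUMED_POWER_WATTS, ASSUMED_MULTS_PER_SECOND)
energy_femtojoules = energy_joules * 1e15

if __name__ == "__main__":
    print(f"{energy_femtojoules:.1f} fJ per multiplication")
```

Under these assumptions each multiplication costs on the order of a femtojoule, a quadrillionth of a joule.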

They tested this architecture by sending weights over an 86-kilometer fiber that connects their lab to MIT Lincoln Laboratory. Netcast enabled machine learning with high accuracy (98.7 percent for image classification and 98.8 percent for digit recognition) at rapid speeds.

“We had to do some calibration, but I was surprised by how little work we had to do to achieve such high accuracy out of the box. We were able to get commercially relevant accuracy,” adds Hamerly.

Moving forward, the researchers want to iterate on the smart transceiver chip to achieve even better performance. They also want to miniaturize the receiver, which is currently the size of a shoe box, down to the size of a single chip so it could fit onto a smart device like a cell phone.

“Using photonics and light as a platform for computing is a really exciting area of research with potentially huge implications on the speed and efficiency of our information technology landscape,” says Euan Allen, a Royal Academy of Engineering Research Fellow at the University of Bath, who was not involved with this work. “The work of Sludds et al. is an exciting step toward seeing real-world implementations of such devices, introducing a new and practical edge-computing scheme while also exploring some of the fundamental limitations of computation at very low (single-photon) light levels.”

The research is funded, in part, by NTT Research, the National Science Foundation, the Air Force Office of Scientific Research, the Air Force Research Laboratory, and the Army Research Office.
